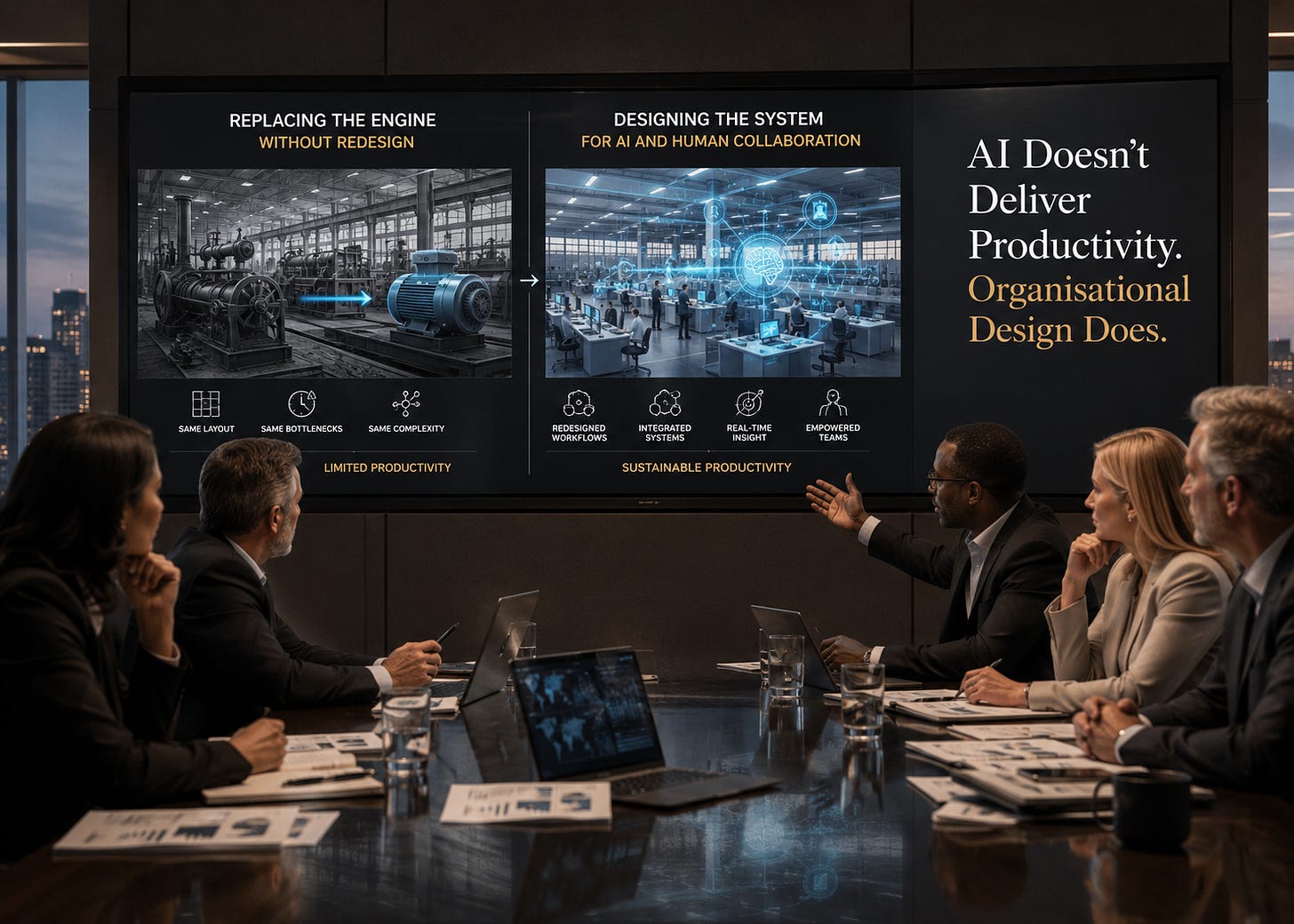

AI Doesn’t Deliver Productivity. Organisational Design Does

Edition 13 — Why boards should stop asking where to deploy AI and start asking what the organisation must redesign to capture value.

The AI productivity paradox is not a technology problem

Boardrooms have largely accepted that AI will become a foundational technology. Capital is flowing rapidly into models, infrastructure, copilots, automation platforms, and enterprise transformation programmes. Executives face mounting pressure from investors, competitors, regulators, and governments to demonstrate progress. Across sectors, organisations are announcing pilots, partnerships, and AI-enabled strategies at remarkable speed.

Yet productivity gains remain uneven.

This gap between investment and realised value should not be surprising. General-purpose technologies rarely transform productivity immediately because organisations initially deploy them into operating models designed for an earlier era. The histories of electricity, enterprise computing, and digital transformation all point to the same pattern: technology adoption often accelerates faster than organisational adaptation.

AI appears to be following the same trajectory. Many organisations are increasing activity rather than productivity. They are producing more reports, more analyses, more dashboards, more customer interactions, and more generated content without materially reducing coordination costs or improving operating leverage. In some cases, AI is adding layers of review, escalation, and managerial oversight because organisations are attempting to graft AI onto fragmented legacy systems rather than redesigning work itself.

This distinction matters because productivity is not a measure of output volume. Productivity reflects an organisation’s ability to create more value with fewer resources, lower friction, better decisions, and faster coordination. Boards should therefore treat AI less as a technology deployment challenge and more as a question of organisational economics. The critical issue is not whether the organisation is using AI. It is whether the organisation is redesigning decision architecture in ways that improve performance.

Electricity shows why powerful technologies underperform at first

The history of electricity provides an instructive precedent (Note: I first wrote about this with reference to agile and digital transformations back in 2018, and again in my book, The 6 Enablers of Business Agility, published in 2021). When electricity became commercially viable in the late nineteenth century, industrialists expected rapid productivity gains. Many factories replaced steam engines with electric motors, anticipating immediate improvements in efficiency and output.

The gains did not arrive.

The problem was not the technology itself. Steam-powered factories had been designed around centralised mechanical power. A single engine drove interconnected shafts, belts, and gears that determined factory layout, workflow design, labour coordination, and production pacing. Electricity enabled distributed power, but most manufacturers initially preserved the existing factory architecture while simply changing the energy source.

Meaningful productivity gains emerged only when factories were redesigned around electricity itself. Production lines were reorganised. Buildings were redesigned. Labour practices evolved. Management assumptions changed. The technology became transformative only when organisations redesigned the operating system surrounding it.

This historical analogy matters because AI is not simply another software upgrade. It is a general-purpose technology capable of reshaping how organisations coordinate work, distribute expertise, exercise managerial control, and make decisions at scale. The lesson from electricity is therefore highly relevant: technology substitution rarely creates transformation on its own. Productivity gains emerge when organisations redesign themselves around the capabilities of the technology. It took 20 years to fully realise the productivity gains of electricity. When it comes to AI, many companies will be lucky to have 20 months.

Most organisations are installing AI into the steam-powered factory

Many organisations are now making the same mistake with AI. Rather than redesigning workflows and decision systems, they are layering AI onto existing structures. Copilots are added to legacy processes. Chatbots sit alongside fragmented service models. Automation tools coexist with duplicated reporting, manual approvals, disconnected systems, and multiple oversight layers.

This often yields local efficiency gains but fails to achieve enterprise-level productivity. Individual employees may complete tasks faster, but the organisation as a whole remains constrained by the same coordination costs, governance structures, and operational fragmentation. In some cases, complexity increases because organisations must manage both legacy workflows and AI-enabled processes simultaneously.

The result is a form of hybrid bureaucracy: human judgement, AI-generated outputs, legacy governance processes, manual controls, and additional review layers all operating together. This pattern is becoming increasingly common across large enterprises where AI adoption is progressing faster than operating model redesign.

This creates a strategic risk that boards should recognise early. Organisations can appear technologically progressive while their underlying operating economics remain largely unchanged. AI activity rises, yet decision latency, duplicated work, coordination friction, and management overhead persist. In some cases, organisations are scaling complexity faster than they are scaling productivity.

The distinction between AI-enabled work and AI-native operating models becomes important here. AI-enabled organisations add AI to existing workflows. AI-native organisations redesign workflows, escalation paths, accountability structures, and management systems to support AI-driven decision-making. The latter is where more significant productivity gains are likely to emerge.

AI is not just a tool; it is a system for scaling judgement

Electricity transformed how organisations powered work. AI is transforming how organisations define, coordinate, and govern work because it operates on judgement rather than physical power.

AI systems generate classifications, recommendations, predictions, summaries, prioritisation signals, and risk scores. Once embedded into dashboards, workflows, reporting structures, and operational processes, these outputs begin to shape managerial behaviour and organisational decision-making. AI therefore influences not only how work is performed, but how organisations determine what matters, what requires intervention, and what constitutes acceptable performance.

This is why AI should be understood as a redesign of decision architecture rather than merely a productivity tool. Decision architecture includes:

what decisions are made,

who makes them,

what information informs them,

how decisions propagate through the organisation, and

how accountability is exercised when decisions influence outcomes.

AI changes all five simultaneously.

This creates opportunities for substantial productivity gains, particularly in environments heavily dependent on information processing, classification, forecasting, coordination, or repetitive judgement. However, it also introduces governance complexity because AI-generated outputs increasingly shape operational and strategic decisions.

In practice, many organisations underestimate how quickly AI systems can begin to redefine organisational norms. Recommendation systems influence prioritisation. AI-generated KPIs shape managerial attention. Predictive systems alter escalation patterns and risk thresholds. Over time, organisations can quietly shift their definitions of quality, acceptable risk, and operational performance without explicit strategic discussion.

That is why boards should view AI governance as inseparable from operating model governance. AI is not simply changing how work is executed. It is changing how organisations exercise judgement at scale.

Productivity depends on complementary capability, not access to the model

One of the more persistent misconceptions in current AI discourse is the assumption that access to advanced models automatically reduces dependency on human expertise. The evidence suggests something more nuanced.

Research involving patent examiners using AI systems found that productivity gains depended heavily on domain expertise. Users with sufficient experience were able to interpret AI outputs effectively and incorporate them into higher-quality decision-making. Less experienced users struggled more because they lacked the contextual understanding needed to validate or challenge the system’s recommendations.

This has important implications for workforce strategy. AI does not eliminate the importance of expertise; it changes where expertise becomes most valuable. In many organisations, mid-level professionals may benefit most because they combine operational knowledge with a greater willingness to incorporate AI into workflows. Less experienced employees may over-trust AI outputs, while highly experienced specialists may resist systems they perceive as unreliable or insufficiently nuanced.

Boards should therefore approach workforce transformation more carefully than many current AI narratives suggest. Generic AI training programmes are unlikely to create substantial productivity gains on their own. Productivity emerges from complementary capabilities operating together:

domain expertise,

workflow design,

managerial oversight,

incentive structures,

data quality, and

AI literacy.

This helps explain why enterprise-wide AI productivity gains are proving harder to realise than many early forecasts implied. Technology alone rarely changes institutional performance. Organisations require supporting structures capable of converting AI-generated outputs into better operational decisions.

Lessons from manufacturing: automate last, not first

Tesla’s manufacturing struggles during the Model 3 production ramp offer a useful operational lesson for AI adoption. The company initially pursued extremely high levels of automation across production processes. Elon Musk later acknowledged that parts of the manufacturing system had become excessively complex and overly dependent on automation before the underlying workflows had been sufficiently simplified.

The deeper lesson was not that automation failed. It was that automation amplifies the underlying system design, whether that design is good or bad.

Musk’s manufacturing philosophy later evolved towards a more disciplined sequence:

challenge the requirement,

remove unnecessary process,

simplify the workflow,

accelerate throughput,

automate last.

Many organisations are currently approaching AI in the reverse order. Existing processes remain intact while AI tools, additional controls, governance reviews, and oversight mechanisms are layered on top. The result is often greater coordination complexity rather than materially improved productivity.

Boards should therefore evaluate AI proposals through an operating model lens rather than a purely technical one. Before approving automation initiatives, leadership teams should be able to explain:

which work is being removed,

which workflows are being redesigned,

how accountability changes, and

whether unnecessary process complexity has been eliminated first.

Otherwise, organisations risk scaling inefficiency rather than eliminating it.

Data architecture determines whether AI scales or stalls

AI amplifies the consequences of organisational data quality, much as electricity amplified the consequences of factory design. Weak data governance, fragmented ownership structures, inconsistent definitions, and poor interoperability become more consequential as AI adoption expands.

Many organisations still operate with disconnected systems, duplicated records, inconsistent customer definitions, and limited visibility across functions. Under traditional operating models, these weaknesses create inefficiency. Under AI-enabled operating models, they become structural constraints on productivity, coordination, and decision quality.

This is why data architecture should not be treated as a technical housekeeping issue delegated entirely to IT functions. Data architecture is part of organisational design because it determines:

what the organisation can observe,

how reliably it can coordinate activity,

how consistently it can measure performance, and

how effectively AI systems can operate across workflows.

The commercial implications are significant. Financial institutions with fragmented customer data struggle to scale AI-driven personalisation or fraud detection consistently across channels. Healthcare providers often face interoperability barriers that limit the usefulness of AI-assisted diagnostics. Retailers investing heavily in recommendation engines frequently discover that inconsistent inventory, pricing, and customer data constrain performance long before model capability becomes the limiting factor.

The organisations most likely to realise sustained AI productivity gains will not necessarily be those deploying the most pilots. They will be those establishing reusable data foundations, interoperable systems, clear ownership models, and governance structures capable of supporting enterprise-wide decision consistency.

This has direct implications for capital allocation. Boards approving AI investments without addressing fragmented data architecture often fund local automation while leaving enterprise productivity constraints unresolved.

Responsible AI failures often come from poor organisational design

Many discussions of responsible AI focus primarily on principles such as fairness, transparency, accountability, explainability, and human oversight. These principles are important, but organisations frequently underestimate how operational design choices determine whether those principles can be implemented effectively.

Bias often originates in flawed data, inconsistent workflows, or poorly designed incentives rather than in the model itself. Accountability gaps emerge when AI influences decisions but responsibility for outcomes remains unclear. Explainability challenges frequently reflect unresolved trade-offs between predictive performance, auditability, operational speed, and managerial control.

There is also a more subtle governance issue. AI-generated metrics, classifications, and performance signals can gradually reshape organisational behaviour by influencing what managers prioritise, how employees are evaluated, and what the organisation treats as success. Over time, AI systems can alter operational norms and risk tolerances without explicit strategic discussion.

Responsible AI and productivity therefore depend on many of the same underlying capabilities:

clear decision ownership,

strong data governance,

coherent workflows,

defined escalation paths, and

effective managerial oversight.

This is why responsible AI should not be treated as a separate governance agenda operating alongside productivity transformation. Both depend on the same organisational design choices.

Boards must govern the redesign, not just approve the technology

Boards add limited value by debating individual AI tools. Their primary responsibility is to ensure that AI investment is aligned with organisational redesign, strategic priorities, and value-creation governance.

This requires a different set of board-level questions. Instead of focusing narrowly on deployment activity, boards should examine whether leadership teams are redesigning the decision architecture in ways that improve productivity, responsiveness, control, and resilience.

That includes questions such as:

Which workflows are being fundamentally redesigned rather than merely automated?

Which processes are being removed before AI is introduced?

How will AI change managerial accountability and escalation?

What complementary capabilities are required for AI to improve judgement rather than weaken it?

Which KPIs or performance measures may become distorted by AI-generated outputs?

What evidence demonstrates genuine productivity improvement rather than increased operational activity?

How will AI reshape the organisation’s operating economics over time?

These are governance questions, not technical ones. They sit directly within the board’s responsibility to oversee strategy, capital allocation, organisational capability, risk, and long-term competitiveness.

The boards most likely to govern AI effectively will be those that recognise AI as an operating model transformation rather than a technology programme. That requires oversight not only of deployment activity, but of how the organisation redesigns work itself.

The lesson from electricity still holds

Electricity did not transform industrial productivity when organisations merely replaced steam engines with electric motors. It transformed productivity when factories, workflows, labour models, and management systems were redesigned around distributed power.

AI will follow the same pattern.

Organisations that simply layer AI onto legacy structures may achieve pockets of efficiency, but they are unlikely to achieve significant operating leverage or sustained competitive advantage. The larger gains will accrue to organisations willing to redesign their decision architecture, workforce capability, governance systems, data foundations, and performance management to align with the realities of AI-enabled work.

Over time, this is likely to create widening differences between firms that merely deploy AI and firms that redesign themselves around it. The former may achieve incremental efficiencies. The latter may develop structurally different operating economics: faster coordination, lower managerial overhead, better decision consistency, and greater scalability.

This is ultimately why AI should be treated as a strategic operating model transformation rather than a technology deployment programme.

The board-level implication is straightforward. AI productivity is not primarily a technology dividend. It is an organisational design dividend. The organisations that recognise this early will not necessarily deploy the most AI. They will build the most adaptive, coherent, and governable systems around it.

Subscribe to AI in the Boardroom for more board-level analysis on AI strategy, governance, and transformation.